How ChatGPT Will Help Hack Your Network

ChatGPT has recently gained attention for its impressive results and its ease of use in generating human-like text from simple prompts. While much of the discussion centers on its potential impact on various jobs, it’s crucial to also consider its consequences for cybersecurity.

The speed, accuracy, and ease of use provided by ChatGPT can enable malicious actors to more efficiently identify vulnerabilities in targeted organizations. As AI-powered tools like ChatGPT continue to evolve, it’s important for cybersecurity professionals to stay ahead of potential threats. Here are just a few examples of how ChatGPT could be misused by bad actors to gain access and do damage:

- Phishing attacks: A hacker could use ChatGPT to generate highly convincing phishing emails, making it more likely that a target will click on a malicious link or provide sensitive information.

- Password cracking: ChatGPT could be used to generate large numbers of potential passwords, making it more likely that a hacker will be able to guess the correct password for a given account.

- Social engineering: A hacker could use ChatGPT to generate more convincing and personalized social engineering attacks, making it more difficult for a target to detect the attack.

- Network scanning: ChatGPT could be used to write or refine network-scanning scripts and interpret their output, making it more likely that a hacker will be able to identify and exploit vulnerabilities in a target network.

- Exploit generation: ChatGPT could be used to generate more sophisticated and targeted exploits, making it more likely that a hacker will be able to successfully exploit a vulnerability.

- Malware generation: ChatGPT could be used to generate malware code designed to evade antivirus software and bypass network security.

- Command and control: ChatGPT could be used to help build command-and-control (C2) systems for controlling a botnet or other malicious infrastructure.

ChatGPT and other AI-powered technologies can lower the barrier to entry for malicious actors and help them more easily create sophisticated attack methods that subvert traditional signature-based detection. For example, hackers can use ChatGPT to produce convincing phishing emails, social engineering scams, or malware payloads that traditional security systems would struggle to detect, and to do so in a fraction of the time it would take to craft them manually, if they could craft them manually at all.

The capabilities and accuracy of these technologies are improving rapidly, with ChatGPT and similar models growing more capable with each release. According to MixMode’s Chief Scientist Igor Mezic, who has built some of the world’s most advanced AI in conjunction with organizations like DARPA and the DoD, “AI is advancing at an expanding pace. The latest release of the ChatGPT engine has many useful uses. Unfortunately it also expands the cyberattack surface, exponentially.”

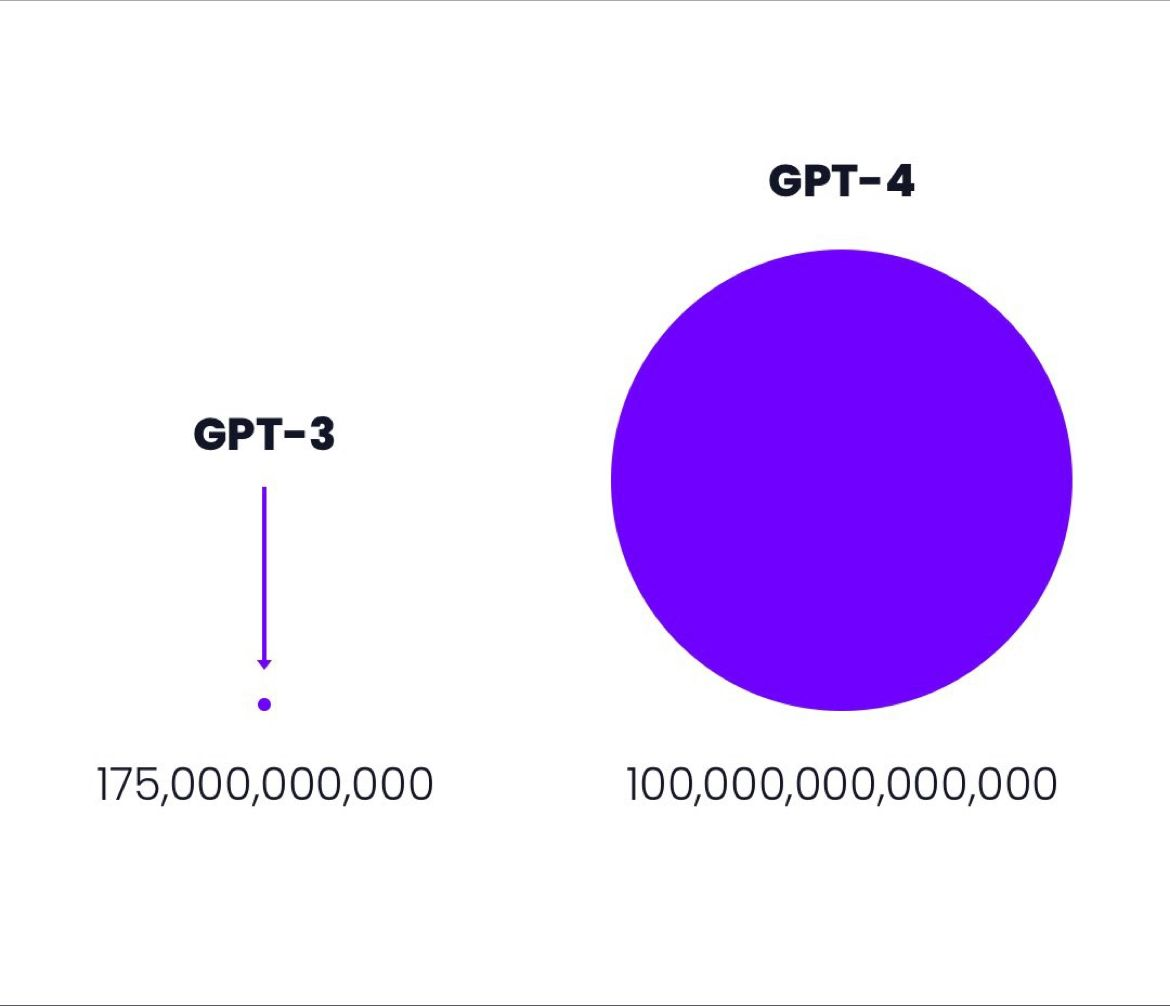

For example, ChatGPT is fine-tuned from a model in the GPT-3.5 series, a descendant of GPT-3, which has 175 billion parameters. Its successor, GPT-4, is already under development and expected to be released in 2023. Widely circulated estimates put GPT-4 at 100 trillion parameters, though OpenAI has not confirmed any such figure. If accurate, that would be an increase of nearly three orders of magnitude and would approach the estimated number of synaptic connections in the human brain. Either way, this makes it even more crucial for cybersecurity professionals to stay ahead of potential threats.

Cybersecurity professionals should begin educating themselves broadly about the different types of AI being built and arm themselves with the right tools to handle the increasingly sophisticated cyberattacks they will face.

*This blog post was written in part with help from ChatGPT.*